According to Soderstrom and Bjork, latent learning refers to “learning that occurs in the absence of any obvious reinforcement or noticeable behavioural changes” (Soderstrom and Bjork, 2015, p. 177). Most often associated with the work of Edward Tolman in the 1930s, latent learning is viewed as hidden (or behaviourally silent) because it is revealed only when some kind of reinforcement is introduced. Latent learning therefore has obvious implications for the distinction between learning and performance, particularly if we subscribe to the notion that learning must involve a relatively permanent, long-term change. For example, if I were to learn a simple tune on the guitar today yet was unable to play it tomorrow, whatever change occurred today would have been only temporary. In the language of cognitive psychology, my ability to play that specific tune wouldn’t have represented a relatively permanent change in long-term memory; instead, it would have created the illusion of learning. Now, you might not agree with this definition of learning, but it’s hard to deny that if I were able to demonstrate something today but unable to demonstrate it tomorrow, or next week, it would be difficult to claim that I had learned anything at all.

Or, as Soderstrom and Bjork put it:

The primary goal of instruction should be to facilitate long-term learning—that is, to create relatively permanent changes in comprehension, understanding, and skills of the types that will support long-term retention and transfer. During the instruction or training process, however, what we can observe and measure is performance, which is often an unreliable index of whether the relatively long-term changes that constitute learning have taken place.

From salivating dogs to rats in mazes

The notion of latent learning was, initially at least, a controversial one because it was assumed at the time that learning couldn’t take place without some kind of reinforcement. Furthermore, learning itself cannot be seen and can only be evidenced through behaviour. Therefore, to fully appreciate the importance of latent learning, we first need to examine a school of thought that dominated psychology during the first half of the twentieth century: behaviourism. Behaviourists argue that learning occurs as the result of conditioning. They reject the use of introspection and anything that cannot be directly observed, including cognitive processes such as memory.

This notion has its roots in the work of the Russian physiologist Ivan Pavlov, who chanced upon a curious form of learned response while investigating salivary secretions in dogs. Pavlov found not only that salivation increased when the dogs were about to be fed, but also that they salivated when they saw the trappings associated with feeding, including the laboratory assistant charged with feeding them. Pavlov was then able to associate, say, the sound of a bell with the food, so that when the dogs heard the bell they would begin to salivate (although, contrary to popular belief, Pavlov more often used a metronome than a bell). This might take several pairings of the two, but eventually, by presenting the sound just before the food, the sound alone comes to prompt salivation in anticipation of the food. This kind of learning is variously referred to as stimulus-response learning, Pavlovian conditioning or classical conditioning.

B.F. Skinner distinguished between what he termed respondent behaviour (triggered automatically by a stimulus in the environment, such as a sound) and operant behaviour (behaviour that is emitted voluntarily). The salivating dog can’t help but salivate, but in other instances animals might volunteer a certain response because they have learned that it will lead to a desirable outcome. Say, for example, we place a pigeon in a cage and at one end of the cage place a button that, when pecked, will release a food pellet. The pigeon will most likely peck at random areas of the cage (because that’s what pigeons do). Eventually, however, it will accidentally peck the button and release some food into a tray. At this point the bird hasn’t associated pecking the button with the food, so it just continues to peck at random. The more often the pigeon pecks the button and receives food, the stronger the connection becomes, until the bird learns that pecking the button will guarantee food. The pigeon is motivated by a reward (the food). Now, if a mischievous researcher releases food into the tray regardless of what the bird is doing, and the food happens to arrive just as the bird flaps its wings, the pigeon may well come to associate the flapping of its wings with the delivery of food. Skinner called this phenomenon ‘superstition’ in the pigeon, although it doesn’t only apply to pigeons.

Like the pigeon that accidentally chanced upon a means of obtaining food, Edward Thorndike viewed this type of learning as a process of trial and error. He built puzzle boxes for use with cats, in which the task was to operate a latch that would open the door (see below). When a cat managed to free itself, it was rewarded with a piece of fish. Initially, the cat would behave randomly while attempting to escape the box but would eventually, purely by chance, work the latch and escape before helping itself to the fish. Thorndike would then place the cat back in the box and the process would repeat. With each repetition the cat became more adept at escaping; a combination of time and repetition strengthened the behaviour needed for the desired outcome – escape. In one particular instance, a cat took around five minutes to escape in the first trial, but after around twenty trials this had been reduced to about five seconds. The principle that behaviours followed by satisfying consequences are strengthened became known as Thorndike’s law of effect, and it laid the groundwork for operant conditioning, helping to distinguish it from classical conditioning.

(Almost) Cognitive Explanations

The main problem with behaviourist explanations, however, is that in their desire to emphasise what can be observed, they exclude internal thought processes as well as the possibility that learning might occur in the absence of motivation or reinforcement. Are humans simple stimulus-response machines, or are they highly complex decision makers? Edward Tolman was conducting his own studies of learning in rats at the same time as the behaviourists, so he is often thought of as a behaviourist himself. In retrospect, however, Tolman could easily be described as one of the first cognitive psychologists, mainly because his research implied that reinforcement wasn’t always necessary for learning to take place and that learning often resulted from the creation of internal cognitive maps.

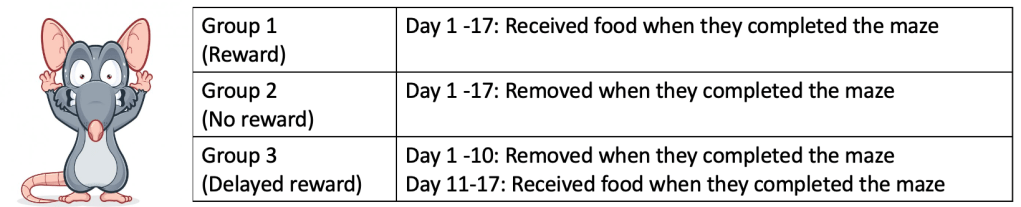

Tolman and Honzik (1930) had rats learn a maze under three conditions. In the first, the rats were reinforced every time they found their way through the maze to a food box. In the second, the rats received no reinforcement. A third group received no reinforcement for the first ten days but was reinforced from the eleventh day onwards. The first group learned the maze quickly and made very few errors, while the second group (the no-reinforcement condition) showed little improvement in the time it took to complete the maze and tended to wander around with very little aim. The third group, however, made no apparent progress during the first ten days but, once reinforcement was introduced, showed a sudden drop in the time it took to find the food, almost immediately catching up with the first group.

Although it wasn’t observable, the rats in group 3 had been learning their way through the maze during the first ten days; the learning was simply latent, and it became visible only once they were given an incentive. Tolman concluded that reinforcement was important for the performance of learned behaviour but not necessarily for learning itself. This is often referred to as place (or sign) learning, in that the rats learn expectations about which part of the maze will be followed by which other part. Tolman called these ‘expectations’ cognitive (or mental) maps. We can think of them as simple perceptual maps of the maze and the spatial relationships between certain landmarks.

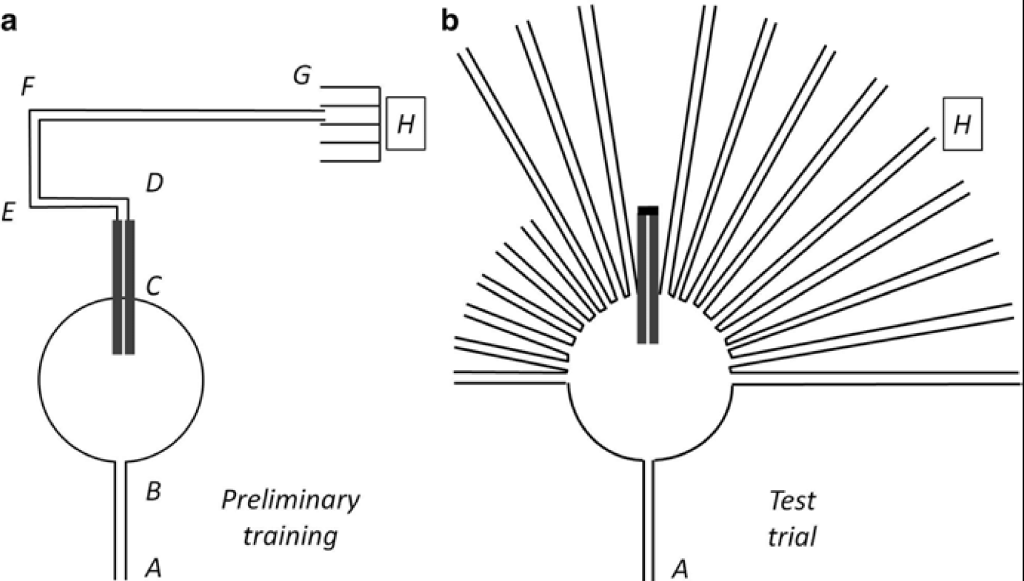

The rats in Tolman’s studies were also able to adapt to a changing environment. When their usual route was blocked, they found alternative routes and shortcuts, and when the maze was rotated they were still able to find the food from different starting locations. For example, in another of Tolman’s experiments, rats learned to complete a simple maze to receive food (labelled ‘a’ in the diagram above). Once the rats had learned the maze, it was replaced with a more complex version (‘b’) with paths radiating out in several directions but with the original route (from the first part of the study) blocked. The rats still managed to reach the food despite these changes, most often choosing the path that pointed most directly towards the food’s original location (see figure). In yet another study, researchers flooded the maze immediately after the rats had learned it, and the rats swam to the food with no more errors than they had made when walking (Restle, 1957).

But rats aren’t human beings, and these findings may not apply to people. Stevenson did, however, examine latent learning in humans (Stevenson, 1954). Stevenson had children (some as young as three) explore a series of objects in an attempt to find a key that would open a box. However, the environment also contained non-key objects and other items that were irrelevant to the task. The question under investigation was: would the children learn the locations of these unrelated items while searching for the relevant ones? In other words, would the children display latent learning? The short answer is yes: when the children were asked to find the irrelevant, non-key items, they were faster at doing so if those items had been part of the environment they had already explored. Stevenson also noted that latent learning increased with age.

Latent learning in the real world

How would latent learning work in the real world? Say, for example, we have moved to a new town and each morning we catch the bus to work and return in the evening. One particularly pleasant morning we decide to walk to work rather than take the bus. Now, although we haven’t deliberately learned the route, our bus journeys have allowed us to build an internal map, complete with landmarks along the way. We have learned the route latently, and it’s only when we need to use that information that the learning can be evidenced through our behaviour. The reinforcement (or reward, if you like) is goal-directed: reaching our destination on time. We didn’t consciously learn the route while sitting on the bus day after day, but we did learn it; we just didn’t know it until that learning needed to be evidenced.

More recently, Wang and Hayden (2021) have suggested a connection between latent learning and curiosity, arguing that curiosity may motivate latent learners. “While latent learning is often treated as a passive or incidental process, it normally reflects a strong evolved pressure to actively seek large amounts of information. That information in turn allows curious decision makers to represent the structure of their environment, that is, to form cognitive maps”, they propose.
